What Is Privacy by Design? An Essential Guide for Modern Tech

- shalicearns80

- Jan 13

Privacy by Design isn't just a technical standard or a legal box to check. It’s a completely different way of thinking about how we build things. Instead of treating privacy like a coat of paint you slap on at the end, it’s baked into the very foundation of your products, systems, and even your company culture. It ensures that protecting user data isn't an afterthought—it's the starting point.

What Is Privacy By Design Really About?

Let’s think about it like building a skyscraper. You wouldn't pour the concrete and raise all 100 floors before figuring out how to make it earthquake-proof. That’s a recipe for disaster. Instead, seismic resilience is designed directly into the blueprints from day one—it’s in the foundation, the steel frame, and the very architecture of the building.

Privacy by Design applies this exact same logic to data. It’s a philosophical shift away from a reactive, damage-control mindset toward a proactive framework that anticipates and prevents privacy issues before they can ever happen.

From Afterthought to Foundation

For years, privacy was the last item on the pre-launch checklist. It was a legal hurdle, something to be dealt with after all the "important" work was done. This approach gave us systems that were wide open by default, forcing users to navigate a maze of confusing settings just to feel safe.

Privacy by Design flips that script entirely. It demands that privacy be the default setting. The user shouldn't have to do anything to be protected; the system should be built to protect them automatically. Data protection becomes a core feature, not a bug you patch later on.

This idea isn't new. The concept of Privacy by Design (PbD) was first formally articulated back in 1995 by Ann Cavoukian, then with the Office of the Information and Privacy Commissioner of Ontario, Canada. She laid out seven foundational principles that have since become the bedrock of modern data protection. You can get a glimpse into how these principles have been adopted over the years in this World Bank report on current practices.

The Core Idea: Building Trust

At its heart, Privacy by Design is about building systems people can trust. In a world running on data, that trust is your most valuable asset. When you make privacy a non-negotiable part of your design process, you create a "positive-sum" game where business goals and individual rights don't just coexist—they support each other.

Privacy by Design isn't about saying "no" to innovation. It's about finding a better, more responsible way to say "yes." It's proof that you can build incredible, data-rich applications without treating privacy as an obstacle.

Taking this proactive stance pays off in some very tangible ways:

Reduced Risk: By tackling privacy issues upfront, you dramatically lower your odds of facing a costly data breach or eye-watering regulatory fines later on.

Enhanced Customer Trust: When people feel their data is respected, they’re far more likely to stick with you and champion your brand. It’s that simple.

Future-Proofing: A PbD approach helps you build for tomorrow. Since it’s based on timeless principles, your systems are better prepared for the next wave of regulations.

Coming up, we’ll dive into the seven foundational principles of PbD, giving you a clear roadmap to start putting this powerful framework into practice.

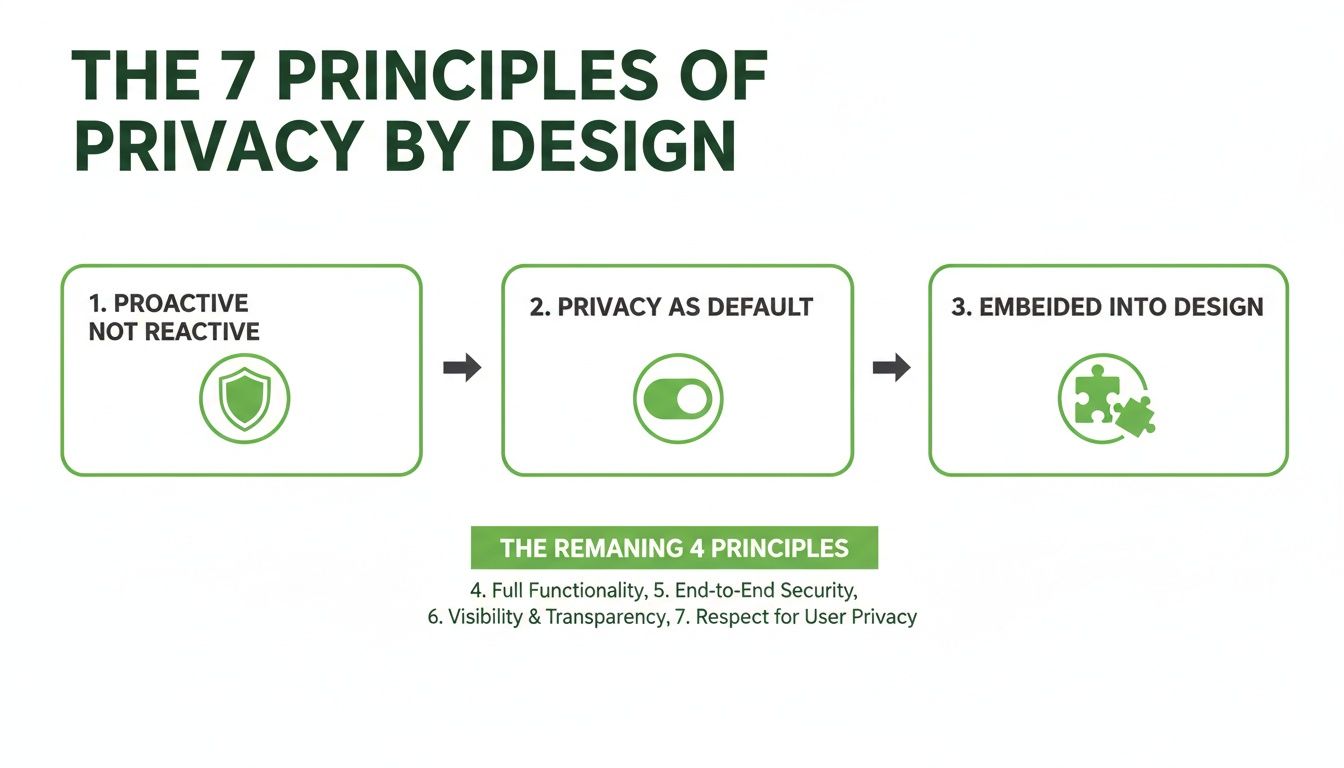

The Seven Foundational Principles of Privacy by Design

So, what does Privacy by Design actually look like when you're building something? To get past the buzzwords, you have to break it down into its core components. These aren't rigid, technical rules but seven guiding principles that function like a compass for anyone building products or systems that handle personal data.

Think of them like the different safety systems in a modern car. You've got anti-lock brakes, airbags, lane-keep assist, and blind-spot monitoring. Each system does something different, but they all work together toward one unified goal: keeping the driver and passengers safe. The principles of PbD work the same way for protecting user data.

1. Proactive, Not Reactive; Preventative, Not Remedial

This first principle is the absolute heart of Privacy by Design. It’s a complete shift in mindset: you must anticipate and prevent privacy issues before they can ever happen, instead of scrambling to clean up a mess after a breach.

It’s the difference between installing a fire sprinkler system during a building's construction versus waiting for a fire to break out before you run to the store for an extinguisher. A reactive approach waits for the user complaint or the regulator's fine, then tries to patch the hole. A proactive approach sees the potential for over-collection of data in the design phase and builds in strict data minimization from day one.

2. Privacy as the Default Setting

This is a big one. It means that when a user gets your product or service, their personal data is automatically protected right out of the box. They shouldn't have to navigate a maze of settings to lock down their information—privacy should be the factory default.

The classic violation of this is the pre-ticked consent box. You've seen them everywhere: a little box already checked that says, "Yes, I agree to share my data for marketing!" That makes data sharing the default. True Privacy by Design flips this on its head. The box must be unchecked, requiring the user to make a conscious, deliberate choice to opt in.

This principle ensures the path of least resistance leads to the highest level of privacy. It respects user autonomy by making privacy effortless and data sharing an intentional act.
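
To make that concrete, here is a minimal sketch of what "privacy as the default" can look like in code. The `PrivacySettings` class and its field names are hypothetical, invented for illustration rather than taken from any particular product. The point is simply that every optional data use starts switched off and only changes through an explicit opt-in.

```python
from dataclasses import dataclass, asdict


@dataclass
class PrivacySettings:
    """Hypothetical per-user settings; every optional data use starts disabled."""
    marketing_emails: bool = False   # the consent box starts unchecked
    location_tracking: bool = False  # off until the user turns it on
    analytics_sharing: bool = False  # no sharing without an explicit choice

    def opt_in(self, setting: str) -> None:
        """Record a deliberate user choice to enable one specific setting."""
        if setting not in asdict(self):
            raise ValueError(f"Unknown setting: {setting}")
        setattr(self, setting, True)


# A brand-new user is protected without doing anything.
new_user = PrivacySettings()
print(asdict(new_user))

# Sharing only happens after a conscious, per-setting opt-in.
new_user.opt_in("location_tracking")
print(new_user.location_tracking)
```

Because the defaults live in the type itself, any code path that creates a user gets the protective settings automatically. Nobody has to remember to apply them.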

3. Privacy Embedded into Design

Privacy can't be an afterthought. It's not a feature you can just bolt on at the end of the development cycle. Instead, it must be an essential, non-negotiable part of the core architecture, woven into the very fabric of the product.

Imagine you're building a submarine. You wouldn't construct the whole vessel and then try to weld on an outer layer of steel to make it waterproof. That would be absurd. The integrity of the hull is fundamental to the design from the first blueprint. In the same way, privacy controls like end-to-end encryption and access management have to be integrated from the start, not just sprinkled on top.

4. Full Functionality—Positive-Sum, Not Zero-Sum

There's a persistent myth that privacy and functionality are enemies—that to get more of one, you have to sacrifice the other. This principle completely rejects that zero-sum thinking. It insists that you can, and must, achieve both without making compromises.

For example, a health and fitness app can offer incredible personalized insights without needing to vacuum up raw, identifiable health records. By processing sensitive calculations directly on the user's device and only sharing anonymized, aggregated data for trend analysis, the app delivers its full value. The user gets cool features, and the company gets useful insights, all while robustly protecting individual privacy. That’s a "positive-sum" outcome where everyone wins.
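
One way to picture the "aggregated data only" idea is the sketch below. It assumes a hypothetical `aggregate_for_sharing` function and an illustrative `min_group_size` threshold, neither of which comes from a specific app. Raw readings are grouped, averaged, and any group too small to hide an individual is dropped before anything is shared. Aggregation with suppression lowers re-identification risk, but on its own it is not a guarantee of anonymity.

```python
from collections import defaultdict
from statistics import mean


def aggregate_for_sharing(records, min_group_size=5):
    """Return average daily steps per age band, dropping groups that are too small.

    `records` is an iterable of (age_band, daily_steps) pairs that stays
    inside the trusted boundary in raw form. Groups with fewer than
    `min_group_size` people are suppressed so a single person cannot be
    singled out from the shared summary.
    """
    groups = defaultdict(list)
    for age_band, daily_steps in records:
        groups[age_band].append(daily_steps)

    return {
        band: round(mean(steps))
        for band, steps in groups.items()
        if len(steps) >= min_group_size
    }


raw = [("30-39", 8000), ("30-39", 9000), ("30-39", 7000),
       ("30-39", 10000), ("30-39", 6000), ("70-79", 4000)]
print(aggregate_for_sharing(raw))  # the lone "70-79" record is suppressed
```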

5. End-to-End Security—Lifecycle Protection

You can't have privacy without strong security. This principle demands that data is protected throughout its entire lifecycle, from the instant it’s created or collected until the moment it's securely and permanently destroyed.

This means you need a comprehensive security posture covering data at every stage:

At Rest: Encrypting information stored in your databases and on your servers.

In Transit: Using strong protocols like TLS to shield data as it moves across networks.

In Use: Employing secure enclaves or other confidential computing methods when data is being actively processed.

At Destruction: Using cryptographic erasure or other techniques to ensure data is gone for good once it’s no longer needed.
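
The destruction stage is the one teams most often forget, so here is a rough sketch of it. Note that this shows plain retention-based deletion, a simpler stand-in for the cryptographic erasure mentioned above. The `purge_expired` function and the record layout are assumptions made for illustration.

```python
from datetime import datetime, timedelta, timezone


def purge_expired(records, retention, now=None):
    """Return only the records still inside their retention window.

    `records` is a list of dicts with a timezone-aware `collected_at` datetime.
    Anything older than `retention` is dropped; a real system would also
    need to remove it from backups, caches, and logs.
    """
    now = now or datetime.now(timezone.utc)
    cutoff = now - retention
    return [r for r in records if r["collected_at"] >= cutoff]


now = datetime(2024, 6, 1, tzinfo=timezone.utc)
records = [
    {"id": 1, "collected_at": datetime(2024, 5, 20, tzinfo=timezone.utc)},
    {"id": 2, "collected_at": datetime(2023, 1, 15, tzinfo=timezone.utc)},
]
kept = purge_expired(records, retention=timedelta(days=90), now=now)
print([r["id"] for r in kept])  # only the recent record survives
```

Running a job like this on a schedule turns "delete it when we no longer need it" from a policy sentence into something that actually happens.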

6. Visibility and Transparency—Keep it Open

Trust is built on transparency. To earn it, you have to be completely open and honest about how you handle data. Users, regulators, and auditors should be able to see and verify that your systems and policies work as advertised.

This goes way beyond publishing a 50-page privacy policy filled with legalese that nobody reads. It’s about providing clear, simple, and accessible information on what data you collect, why you need it, and how you protect it. A user-friendly privacy dashboard that lets people see, manage, and delete their own data is a perfect example of this principle in action.

7. Respect for User Privacy—Keep it User-Centric

At the end of the day, all the other principles serve this one ultimate goal: putting the user first. This means designing every system with the user's interests, rights, and expectations as your top priority.

It’s about giving people clear choices, easy-to-understand consent notices, and simple controls that empower them to be the masters of their own digital identity. When you place the user at the center of your design process, you fundamentally shift your business model from one of data extraction to one of mutual trust and respect.

To tie this all together, here’s a quick-glance table that summarizes these seven principles.

The 7 Principles of PbD at a Glance

| Principle | Core Concept | Implementation Example |
|---|---|---|
| 1. Proactive not Reactive | Anticipate and prevent privacy risks before they happen. | Conducting a Data Protection Impact Assessment (DPIA) before starting a new project. |
| 2. Privacy as the Default | No action is needed from the user to protect their data; it's protected out of the box. | Disabling location tracking by default in a mobile app; the user must actively turn it on. |
| 3. Privacy Embedded into Design | Privacy is a core, inseparable component of the system's architecture. | Building encryption into the database schema from the very first line of code. |
| 4. Full Functionality | Achieve both privacy and your desired functionality without a trade-off. | Using on-device processing for personalization instead of sending raw data to the cloud. |
| 5. End-to-End Security | Protect data continuously throughout its entire lifecycle, from creation to destruction. | Implementing encryption for data at rest, in transit, and ensuring secure deletion protocols. |
| 6. Visibility and Transparency | Be open and honest about your data practices in a way users can verify. | Creating a simple privacy dashboard where users can see and download all their data. |
| 7. Respect for User Privacy | Make the user's interests the central focus of all design decisions. | Offering granular consent options, not just a single "accept all" button. |

By keeping these seven principles in mind, teams can move from simply complying with regulations to building products that people genuinely trust.

Putting Privacy by Design into Practice

Knowing the seven principles is one thing. Actually weaving them into the fabric of your organization? That's a whole different challenge.

Moving from theory to real-world execution isn't about adding another layer of bureaucracy. It's about making privacy a shared responsibility—a fundamental measure of quality, just like performance or security. This requires a structured approach that embeds privacy into every single stage of your development lifecycle.

The journey starts long before anyone writes a single line of code. It begins in the ideation phase with a critical tool: the Data Protection Impact Assessment (DPIA).

Think of a DPIA as a risk assessment for privacy. It forces your team to ask the tough questions right from the start. What data are we collecting? Why do we really need it? What could go wrong here?

By making DPIAs a mandatory first step for any new project, you fundamentally shift the organization from a reactive posture to a proactive one. It’s no longer about scrambling to fix problems after a launch; it’s about designing them out of the system from day one.

This proactive mindset is the cornerstone, as the principles flow logically from one another.

As the diagram shows, once you're proactive, setting privacy as the default and embedding it into the design become natural next steps.

Adopting Practical Privacy Techniques

Once you've mapped out the initial risks, it's time for your teams to implement specific technical and procedural controls. These aren't just abstract ideas; they are tangible engineering methods that reduce your company's exposure and often improve system efficiency at the same time.

The goal is to build robust protections that don't torpedo the user experience.

Three of the most effective techniques you can use are:

Data Minimization: This is the simplest and yet most powerful principle in practice. Only collect the absolute minimum data required for a specific, defined purpose. If you don't have it, you can't lose it. It's that simple.

Pseudonymization: This process swaps out personally identifiable information for artificial identifiers. The original data can still be linked back with a separate, securely stored key, which makes it great for internal analysis while slashing risk. A common example is replacing a user's name with a random ID number in your analytics logs.

Anonymization: This takes it a step further by permanently removing or altering data so individuals can never be re-identified. Once data is truly anonymized, it often falls outside the scope of privacy regulations like GDPR, making it incredibly valuable for public reports or training machine learning models.
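
The first two techniques are easy to sketch in a few lines. The example below is illustrative only: the `ALLOWED_FIELDS` allowlist, the function names, and the sample record are all made up. It also uses a keyed hash (HMAC-SHA256) as the pseudonym rather than a random ID stored in a lookup table, which is a different but common way to get the same "linkable only with a separate key" property.

```python
import hashlib
import hmac

# Hypothetical allowlist: the only fields this analytics purpose needs.
ALLOWED_FIELDS = {"user_id", "plan", "signup_month"}


def minimize(record: dict) -> dict:
    """Data minimization: keep only allowlisted fields, drop everything else."""
    return {k: v for k, v in record.items() if k in ALLOWED_FIELDS}


def pseudonymize(user_id: str, secret_key: bytes) -> str:
    """Pseudonymization: replace an identifier with a keyed hash (HMAC-SHA256).

    The same input and key always give the same pseudonym, so records can
    still be joined for analysis. The key must be stored separately from the
    data, because anyone holding it can recompute the mapping.
    """
    return hmac.new(secret_key, user_id.encode("utf-8"), hashlib.sha256).hexdigest()


key = b"example-key-load-from-a-secrets-manager"  # placeholder, never hard-code
raw = {"user_id": "alice@example.com", "plan": "pro",
       "signup_month": "2024-03", "home_address": "1 Main St"}

safe = minimize(raw)
safe["user_id"] = pseudonymize(safe["user_id"], key)
print(safe)  # no address, and the email is replaced by an opaque token
```

Keep in mind that pseudonymized data is still personal data under laws like GDPR, since the link back to a person exists for anyone with the key.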

These methods form the technical backbone of a strong Privacy by Design program, turning a policy statement into an engineered reality. Properly implementing these is a core part of any smart data management strategy. If you want to go deeper, you can explore our detailed infographic on data governance.

Creating a Culture of Privacy

At the end of the day, tools and techniques are only as good as the people using them. True Privacy by Design demands a cultural shift where everyone feels accountable for protecting user data. It can't just be the legal or compliance department's problem.

Fostering a culture of privacy means transforming it from a checklist item into a shared value. It becomes a measure of quality and a point of pride in the work, driving teams to build products they would feel safe using themselves.

This cultural integration is built on cross-functional collaboration. Engineers need to grasp the privacy implications of their architectural choices. Product managers have to prioritize privacy features on their roadmaps, not just treat them as "nice-to-haves." Legal teams must become proactive partners, not just reactive enforcers.

Things like regular training, clear documentation, and designating "privacy champions" within different teams can help cement this mindset. It's how you turn an abstract goal like PbD into something your teams practice every single day.

The Global Impact of Privacy by Design

Privacy by Design has officially graduated. It's no longer just an academic idea or a voluntary best practice for companies that want to go the extra mile. Today, it’s a global legal standard, setting the baseline for how responsible organizations everywhere are expected to handle personal data.

These principles aren't just suggestions anymore—they are baked into laws that shape international commerce and define digital trust.

The real tipping point was the European Union's General Data Protection Regulation (GDPR). This wasn't just another piece of legislation; it was a landmark moment that took Privacy by Design from the conference room to the courtroom.

The GDPR didn't just give PbD a polite nod. It carved it directly into the law.

Article 25, titled "Data protection by design and by default," makes this proactive mindset mandatory for any controller processing the personal data of people in the EU. It legally requires those organizations to build in "appropriate technical and organisational measures" right from the start of any project. Just like that, PbD went from a "nice-to-have" to a non-negotiable part of doing business.

The GDPR Domino Effect

The GDPR’s shockwave didn't stop at Europe's borders. It set off a global chain reaction, creating a new gold standard for data protection that countries worldwide are now scrambling to match. This ripple effect shows a clear, undeniable trend toward proactive, user-first privacy laws.

The numbers really drive this home. It's predicted that about 75% of the world’s population will soon have their personal data covered by modern privacy regulations that either directly or indirectly lean on Privacy by Design principles. Since the GDPR kicked things off, over 160 distinct privacy laws have popped up across the globe. You can dive deeper into these data privacy statistics and their implications.

This global shift makes it obvious: adopting PbD isn't just about EU compliance anymore. It's about meeting a universal expectation.

Beyond Europe: Key Regulations Embracing PbD

While the GDPR led the charge, its core philosophy has been picked up and woven into other major legal frameworks, cementing PbD's international status.

California's CCPA / CPRA: While they don't use the exact phrase "Privacy by Design," California’s laws are built on its foundation. The California Privacy Rights Act (CPRA) requires things like data minimization and purpose limitation—both central pillars of PbD. It forces companies to think hard about why they're collecting data and to design systems that honor user privacy from day one.

Brazil's LGPD: Brazil’s Lei Geral de Proteção de Dados is heavily inspired by the GDPR. It explicitly demands that security and prevention measures be integrated right from the "conception phase" of any new product or service.

The NIST Privacy Framework: Developed in the U.S., this framework from the National Institute of Standards and Technology isn't mandatory, but it’s hugely influential. It offers a practical roadmap for managing privacy risk, organized around ideas like "Identify" and "Protect" that sync up perfectly with the proactive, risk-first approach of Privacy by Design.

The message from regulators worldwide is loud and clear: privacy can't be an afterthought. It must be a fundamental part of your system architecture and your business strategy, considered at every single stage.

When you truly embrace this philosophy, you're not just ticking a compliance box for today's laws. You're building a resilient, adaptable foundation that future-proofs your entire operation for whatever regulations come next. A comprehensive cloud migration risk assessment is often the perfect starting point for building such a system.

This proactive stance shifts compliance from a constant headache into a real competitive advantage.

Applying Privacy by Design in the Age of AI

The explosion of artificial intelligence and machine learning has opened up a new frontier of privacy challenges, making a real commitment to Privacy by Design more critical than ever before. AI systems, especially the massive large language models (LLMs) we see today, are built on colossal datasets. This creates a whole new category of risks that traditional software development never had to deal with.

Simply put, the more data an AI ingests, the greater the potential for that data to be exposed in unexpected ways.

These systems aren't just processing information—they're learning from it. They build complex internal models that can inadvertently memorize and later regurgitate the sensitive data they were trained on. It’s a serious threat that has to be tackled proactively, right from the initial design of the AI itself.

The Unique Privacy Risks of AI

Think about a standard database. If you need to find and delete a specific piece of information, you can. With AI models, it’s not so simple. You’re often dealing with a "black box" where the data is so deeply woven into the model's logic that pulling it out is next to impossible.

This creates several specific dangers:

Training Data Leakage: A model might accidentally spit out verbatim chunks of the private data it was trained on. This could be anything from personal emails and health records to proprietary company code.

Model Inversion Attacks: Savvy attackers can probe an AI model's responses to reverse-engineer its training data. They can effectively piece together sensitive information about individuals without ever touching the original database.

Inference of Sensitive Attributes: An AI can infer deeply personal details a user never shared, like their political views or health status, just by connecting seemingly unrelated data points.

Fixing these issues requires more than just good security. It demands a fundamental commitment to Privacy by Design at the deepest levels of AI development. Taking a proactive stance is essential, which is why a robust AI risk management framework isn't just a good idea; it's a necessity for any responsible innovator.
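
As one small, hedged illustration of tackling leakage at design time, the sketch below scrubs obvious identifiers from text before it ever reaches a training set. The `redact` function and both patterns are assumptions for demonstration. They are deliberately simplistic and will miss plenty of real-world personal data, so treat this as the shape of a pre-processing step, not a complete defense.

```python
import re

# Deliberately simple, illustrative patterns; real PII detection needs far more.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text: str) -> str:
    """Replace email addresses and phone-like numbers with placeholder tokens."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return PHONE_RE.sub("[PHONE]", text)


sample = "Contact Jane at jane.doe@example.com or +1 (555) 010-9999."
print(redact(sample))  # Contact Jane at [EMAIL] or [PHONE].
```

A model can't memorize an email address it never saw, which is data minimization applied to training data.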

The Freeform Advantage: Pioneering Privacy-First AI Since 2013

This is where deep, foundational experience makes all the difference. Freeform isn't just jumping on the AI bandwagon; we've been pioneering marketing AI since our founding in 2013. This decade-plus of focused expertise solidifies our position as an industry leader, with solutions engineered from the ground up on the principles of Privacy by Design.

Our long history of privacy-first AI development gives us a massive head start over traditional marketing agencies now scrambling to catch up.

Freeform's AI isn't an add-on; it's our core. By engineering privacy into our models from day one, we deliver superior results without ever compromising our clients' data security or their customers' trust.

This approach isn't theoretical—it translates directly into distinct advantages for our clients. Because our AI is built with privacy at its heart, it operates with an efficiency and precision that legacy systems can't match.

Better Results Through Better Design

Our commitment to a privacy-centric AI model isn't a limitation—it’s our greatest strength. It lets us innovate responsibly while delivering clear business advantages that traditional agencies struggle to replicate.

Here’s how Freeform’s distinct approach stands out:

Enhanced Speed: Our AI models are built to work with minimized, purpose-driven data. This means faster processing, quicker insights, and more agile campaign adjustments compared to the clunky systems used by traditional agencies that rely on bloated datasets.

Cost-Effectiveness: By sidestepping the massive costs of collecting, storing, and securing unnecessary data, we pass those savings directly to our clients. Our lean, efficient approach means a better return on your investment.

Superior Results: A privacy-first focus naturally leads to higher-quality data and more accurate modeling. Our AI delivers more precise targeting and personalization, driving better engagement and conversions because it operates on trust and relevance, not just data volume.

By embedding privacy into the very architecture of our technology since 2013, Freeform has proven that you don’t have to choose between performance and protection. Our leadership in the marketing AI space is built on a simple but powerful idea: the most effective innovation is also the most responsible.

Privacy by Design in the Real World: It’s Not Just Theory

Principles and frameworks are great, but the real power of Privacy by Design comes to life when you see it in action. These aren’t just abstract concepts for a whiteboard session; they are proven blueprints that show how world-class privacy and outstanding functionality can go hand-in-hand.

The best examples prove that privacy isn’t a roadblock to innovation. Instead, it’s a catalyst for building user trust and delivering services people actually want to use. When you put the user first, you end up with systems that are not only compliant but also more secure, efficient, and widely adopted. The results speak for themselves: stronger customer trust, a lower risk of data breaches, and a much better brand reputation.

Estonia's Digital Society: A National Blueprint

If you want to see what a society built on Privacy by Design looks like, look no further than Estonia. The country is one of the world's most advanced digital nations, and its e-ID system is a masterclass in delivering secure public services at scale. This single digital identity gives citizens access to 99% of government services online, and it is also used for everything from voting and banking to accessing health records.

This didn't happen by accident or by cutting corners on privacy. It happened because privacy was baked into the system's DNA from day one.

Here’s how they pulled it off:

A Decentralized "X-Road" System: Instead of pooling everything in a single, massive government database (a hacker's dream), Estonia keeps its data decentralized. Information stays where it belongs, and government agencies can only query it on a strict need-to-know basis through heavily encrypted channels.

Total User Control: Citizens have a god-mode view of their own data. They can log into a state portal anytime and see exactly which official or agency accessed their information and why. This radical transparency is the bedrock of the system's public trust.

Ironclad Security: The entire ecosystem is fortified with strong cryptography and blockchain-like technology to ensure data integrity. Every transaction is logged and immutable, making the system incredibly resilient to tampering.
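
To get a feel for how a tamper-evident access record can work, here is a toy sketch of a hash-chained log. It is emphatically not Estonia's implementation. The `AccessLog` class, its methods, and the sample agencies are all hypothetical. The sketch only shows the general idea that each entry commits to the one before it, so quietly editing history becomes detectable.

```python
import hashlib
import json


def _digest(body: dict) -> str:
    """Stable SHA-256 over an entry's fields (keys sorted for determinism)."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


class AccessLog:
    """Append-only log where each entry includes the hash of the previous one."""

    def __init__(self):
        self.entries = []

    def record(self, accessor: str, subject: str, purpose: str) -> None:
        prev_hash = self.entries[-1]["hash"] if self.entries else "0" * 64
        body = {"accessor": accessor, "subject": subject,
                "purpose": purpose, "prev_hash": prev_hash}
        self.entries.append({**body, "hash": _digest(body)})

    def verify(self) -> bool:
        """Recompute every hash; an edited or removed entry breaks the chain."""
        prev_hash = "0" * 64
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
                return False
            prev_hash = entry["hash"]
        return True

    def accesses_for(self, subject: str) -> list:
        """What a user-facing portal could show: who looked, and why."""
        return [(e["accessor"], e["purpose"])
                for e in self.entries if e["subject"] == subject]


log = AccessLog()
log.record("tax-office", "citizen-123", "annual return")
log.record("clinic-7", "citizen-123", "appointment booking")
print(log.accesses_for("citizen-123"))
print(log.verify())                              # True: chain is intact

log.entries[0]["purpose"] = "something else"     # simulate tampering
print(log.verify())                              # False: tampering detected
```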

Estonia’s entire model is a powerful lesson in what privacy by design is: it's not just a technical checklist but a social contract that empowers citizens and builds a better-functioning society.

This national-scale implementation proves that robust privacy isn't a limitation. It’s the very foundation that makes widespread digital adoption and trust possible.

Big Tech's Shift to On-Device Processing

In the private sector, we're seeing a major shift from some of the biggest names in tech. To offer slick, personalized features without creating giant, centralized honeypots of sensitive data, they’ve embraced a simple but powerful strategy: on-device processing.

Think about it. Instead of shipping all your personal data—like photos, messages, or biometric info—to a company's cloud server for analysis, the heavy lifting happens right on your phone or laptop.

Features like unlocking your device with your face or your phone organizing photo albums by the people in them are prime examples. The sensitive biometric data mapping your face never actually leaves your device. This approach delivers the best of both worlds: you get a rich, personalized user experience, and the company minimizes the amount of data it has to collect and protect. It’s a clear win-win that shrinks the company's risk profile while giving you confidence that your most personal information stays exactly where it should be—with you.

Got Questions About Privacy by Design? Let's Clear a Few Things Up.

Even when the principles are laid out, putting Privacy by Design into practice can raise some tough questions. It’s completely normal. Let’s tackle some of the most common ones that pop up and get your teams on the same page.

Is Privacy By Design Just Another Name For Good Security?

Not quite. While rock-solid security is absolutely essential, it’s only one piece of a much larger puzzle.

Think of it this way: security is about building a fortress to protect data from outsiders. Privacy by Design is about questioning whether you should have collected that data in the first place. It covers the entire lifecycle—what you collect, why you need it, and when you get rid of it. You can have the most impenetrable security in the world, but it doesn't help if you're hoarding data you never should have had.

PbD forces you to build systems that respect user privacy from the ground up, with security acting as a critical pillar of that foundation.

Can We Really Apply Privacy By Design To Our Old, Existing Systems?

Yes, absolutely—though the approach is a bit different. Ideally, you’re baking privacy in from day one on a new project. But for legacy systems, it's more of a retrofitting job.

This usually starts with a privacy audit to map out where your biggest risks are. From there, you can start implementing Privacy Enhancing Technologies (PETs) to plug the gaps. Common moves include applying data minimization rules to stop collecting unnecessary information and using pseudonymization on existing databases to reduce risk.

It's a process of careful remediation, but it's crucial for managing the risk that’s already in your systems.

What's The Single Biggest Challenge When Implementing It?

Honestly? The biggest hurdle is almost always cultural, not technical.

Getting true Privacy by Design right demands a fundamental mindset shift across the entire company. It’s about moving from a reactive, check-the-box compliance attitude to a proactive culture where privacy is seen as a core feature. It means getting engineers, product managers, marketers, and legal teams to stop working in silos and start collaborating.

The real challenge is getting everyone to see privacy not as a cost center, but as a powerful competitive advantage.

At Freeform Company, we help organizations bridge the gap between innovation and robust governance. Our expertise ensures you can build advanced applications while safeguarding customer data and navigating the complex regulatory environment. Explore our insights at https://www.freeformagency.com/blog.